The telling details of history

Since my big project, A Poetics of Editing, went public two years ago, any time snatched for research has been devoted to a story from the publishing history of the 1950s. This time the output is an article, not a whole book, but in many ways it has been just as absorbing and has inspired a desire to develop the tale for a wider public.

The article, in the journal Logos, is called ‘History with Feeling: The case of Macmillan New York’. This company, once one of the biggest publishers in the US, now exists only in scraps of intellectual property. The inciting incident is the sale of stock in 1951 by the majority shareholder, the London parent Macmillan & Co Ltd – an action taken only reluctantly, at the urging of the New York company’s principal, George P. Brett Jr.

A stockmarket deal might not sound glamorous but behind the event one finds passionate stories, set against historic events on the world stage at the precise moment when global power shifted from one country to another. Brett’s aim was to make the company ‘wholly American’ – to avoid disapproval from isolationist US readers but also, one ends up suspecting, to express national antipathies of his own and resolve an unacknowledged sense of shame.

What was the fascination that sustained my long hours in the archives? Just as the key actors in this historical drama had a mix of motives, so did I. The correspondence evoked a time that profoundly shaped the world into which I was born, and settings – the cities of New York and London – that have both been my home. The cultural antipathies also had resonance for someone who has spent her whole life negotiating prejudices on both sides of the Atlantic.

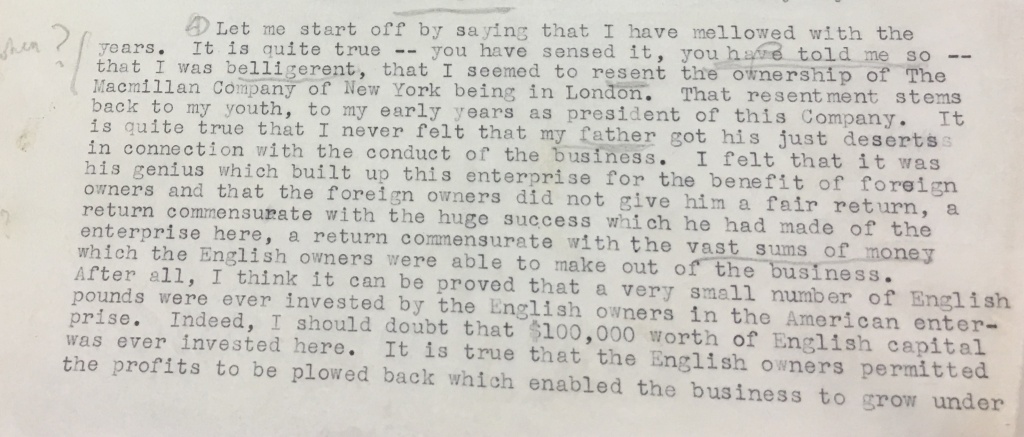

The fascination also lies in the sheer joy of history as a method. Structural analysis is fine, but we need something more. The telling detail about a moment in time and space that leads to specific decisions. The voices that ring out from letters – like the one pictured above from Brett to his London counterpart Daniel Macmillan* – which are purposive but also full of unspoken meanings. What is going on in their world for the correspondents to assume that a particular type of persuasion was needed? We engage afresh in the rhetorical exercise, at our own new juncture in time and space.

* Photo © Macmillan Publishers

A Poetics of Editing: out in autumn 2018

I have been absent from Oddfish for a couple of years, and here is the main reason why – a book long in the making which explores editing fully as a practice in its own right. It proposes the concept of the ‘ideal editor’, to work alongside that of the ideal reader, and the development of a new field of Editing Studies. And it also defines a new materialist poetics that invites a comparative, multi-frame approach. Some kind comments from others:


‘Like Gibson’s ‘affordance’, Greenberg’s idea of ‘editing’ is used in suggestively larger social, cultural, personal, phenomenological, and other ways. It is a rich framework for seeing the world.’ Alan Liu, Distinguished Professor of English at the University of California, Santa Barbara

‘A rigorous – and long overdue – analysis of editing as decision-making, wherever practiced. Greenberg draws on the insights of neuroscience, history, philosophy, literary theory, and the lived experience of writers and editors to offer an original, thought-provoking study.’ Beth Luey, past president SHARP.

‘In this powerful meditation, Greenberg explores books, writing and publishing from an original and critically important angle.’ Michael Bhaskar, publishing director at Canelo and author of Curation and The Content Machine.

Greenberg, Susan L. 2018. A Poetics of Editing. Palgrave Macmillan.

E-book: ISBN 978-3-319-92246-1

Softback: ISBN 978-3-030-06392-4

Hardback: ISBN 978-3-319-92245-4

The PhD supervisor as editor

When I made the switch from editor to academic, about 12 years ago, doing doctoral research on that former profession was a way of connecting two different lives. Now the shoe is on the other foot, and I am training to be a PhD supervisor myself.

So when we were asked to check the pedagogical literature, it is not surprising that my interest was caught by an article on “The supervisor as editor”. [1]

The supervisor fills many roles, but for the field of Creative Writing, the editorial role comes high on the list. In Creative Writing, both self-editing and peer-group workshopping are important, and tutors commonly tell their students they are acting as their editor. This is partly to model professional relationships in publishing, by creating a learning ‘practicum’. But it is also because a tutor’s role is to give feedback on the substantive subject, and in Creative Writing the substantive subject is writing.

At the same time, for both professional and pedagogical reasons it is important to maintain boundaries; style and content are hard to separate, and authors/students need room to interpret any feedback and make the final text their own.

All this means that when it comes to PhDs in Creative Writing, thinking about ‘the supervisor as editor’ is more or less obvious. What may be less obvious, however, is the extent to which the ‘editor’ role might have wider relevance to other fields.

Writing is hard and most people need help with even the most formulaic forms. If that help is not forthcoming from the publisher or institution, writers can opt to hire professional editorial help.

The article’s author, Nigel Krauth, draws attention to the importance of providing editorial help for all doctoral students, and recognises the full range of the editing role; it is not just the critical correction of mistakes, or surface treatment of style, but also a developmental and structural thought process.

He asks, however, how much editing should a supervisor do? And when does the edited thesis stop being the candidate’s work and become a collaboration? If research students are under increasing pressure to publish before completion, does the supervisor end up acting as a commercial editor as well? It is important to explore the academic implications of these new demands, says Krauth:

The pressure on creative writing doctoral supervisors to give editorial assistance for both academic and creative components of the work – in the latter especially, replicating the role of a commercial publisher’s editor – evokes the situation where the school takes on a role similar to that of publisher. But also, the supervisor holds a quality control responsibility like that of the commercial editor, ensuring that outputs are at a requisite standard.

I will expand here by explaining that the potential clash of interests between academic and commercial editing is a particular conundrum for Creative Writing work, which typically goes to a trade publisher rather than the academic press.

I think it is good to recognise the potential for a clash, as long as one underlines the prior claim of doctoral criteria. The supervisor’s job is to help the student meet the demands set for successful completion of a PhD. Research criteria require a thesis to be ‘publishable’; but in this context, it means being published for an audience of two to four people, the participants of a viva.

At the same time, the constraints of a doctoral thesis can be creative, as the freedom of the doctoral ‘practicum’ offers the chance to make non-commercial choices. The demands made by publishers are important for the version which goes to a wider readership, but during the doctoral process, for the doctoral ‘edition’, they do not take precedence. In any event, one cannot assume a unified ‘trade’ view in opposition to a unified academic view: within each group, different editors or supervisors will have a different response to a text.

One can also think about the type of editor that the supervisor should model. Krauth says that supervisors ‘can greatly assist their candidates by being nurturing editors’ (emphasis added). But the pedagogical literature tends to recommend allowing for a range of supervision styles, to suit the particular needs of each project. It is about getting the right match, rather than one or another style being ‘right’. [2]

A further thought, to my mind, is that, yes: writing is hard. But editing is hard too! Not all academics are good at writing, and even professional writers in the Academy are not necessarily strong in editing. If supervisors are to provide editing help, one can do more to train them in this role. One can also do more to recognise the extensive editing work done by academics in many disciplines – for example, editing a collection of essays or chapters – which is not recognised in the REF.

References

[1] Krauth, Nigel. 2009. “The supervisor as editor”, in TEXT Vol 13 No 2, October

[2] Deuchar, R. 2008. “Facilitator, director or critical friend?: contradiction and congruence in doctoral supervision styles”, in Teaching in Higher Education (13)4: 489-500

Lee, Anne and Rowena Murray. 2015. “Supervising writing: helping postgraduate students develop as researchers”, in Innovations in Education and Teaching International, 52:5, 558-570, DOI: 10.1080/14703297.2013.866329

‘Editors talk about editing’ – the back story

This collection of interviews with editors started years ago as part of a PhD thesis in Publishing, from UCL’s Department of Information Studies.


The book version provides readers with a full text of each conversation, a few working conclusions about editing, a caveat about social constructivism, and a reflection on the special character of the interview, a very performative form of research.

Work on a second book drawn from the rest of the thesis – a theoretical and historical analysis of editing up to the digital present – starts in earnest this summer.

Because the whole project sets out to be comparative, the book deliberately juxtaposes people in very different kinds of publishing, to acknowledge not only the familiar ways in which they are different, but also how they might be the same, and to understand recognisable patterns in their practices, values and concerns.

When I approached people, it was not a sure thing that they would say yes. They are high flyers, leaders of their field; would they even want to talk? In fact, they all agreed, and after a few meetings, I realised why. They love their work, but it is a kind of work that does not often get attention in its own right. And here was someone who wanted to talk with them about just that thing.

So they said yes. But it was still an enormous amount of work: to agree on terms; draw up the questions; meet and talk, both of us thinking on our feet; transcribe and edit; consult; analyse; and edit again.

By the end, I joked in the introduction about ‘the almost comic meta-ness of a text about editing. The core subject of the book is the making of a text, and it is being explored through the making of a text. The transcripts are edited by me, and then reviewed by the interviewees, who are editors – and so they are reviewing the editing done to a discussion about editing. It is a game of three-dimensional chess.’

Look out for extracts from the book, published here over the next few weeks.

Open for (PhD supervision) business

Now that my own PhD is done and dusted, I am looking to take on doctoral students at the University of Roehampton, where I have worked for the last 10 years. Besides supportive supervision, I can offer expertise in two areas.

The first taps into specialised knowledge and experience about contemporary publishing and editing, including digital media, and the way in which editorial roles change over time. This connects with book history, information studies, the digital humanities, journalism, translation studies, and media studies.

The second draws on long years of teaching and practice in writing narrative nonfiction. This is a broad umbrella term for a genre sometimes identified as creative nonfiction, literary journalism, travel literature or life writing (memoir and biography).

In both cases I can offer an overview of the relationship between practice and theory, and the potential of poetics and rhetoric to dialogue with other traditions.

If you are contemplating a PhD and looking for someone with this range of interests, please get in touch.

One good way of starting down the doctoral road is through the Techne AHRC Doctoral Training Partnership, a consortium of seven higher education institutions including Roehampton. This will award approximately 35 PhD studentships per year, until 2019, complete with stipend and fee waiver. The awards are very competitive, but all applications are considered.

The focus of Techne (Greek for ‘craft’) is on interdisciplinarity and career potential both within and beyond academia. The programme includes input and placement opportunities provided by Techne’s 13 partner organisations in the cultural sector.

The deadline for the current round is Friday January 9, 2015. Interviews are held February 16 to 23, 2015. The studentships start on October 1, 2015. For further information on how to apply, please go to the University of Roehampton’s Graduate Office web pages.

Neither true nor beautiful

A project I have worked on for more than two years has just gone public, and I am enjoying the pleasure that always comes with publication.

A special issue of Journalism: Theory, Practice, Criticism (July 2014; Vol. 15, No. 5) looks at literary journalism, in particular the insights it brings to ethical issues.

I was honoured and happy when Dr Julie Wheelwright invited me to join her in a rewarding stint as guest editors of the long-established journal. The thinking behind the issue and a summary of the different contributions are provided in the introduction, ‘Literary journalism: ethics in three dimensions’.

The experience has fed not just one but two of my obsessions – editing, and the writing of narrative nonfiction.

It has reminded me that the reward of editing comes not just from putting texts out into the world, but also from presenting work in a way that helps it to join a conversation and advance the debate. A text is not just a text: it is changed by the forms and conventions of publishing. A journal article is different from a blog post; an article conceived as part of a larger set is different from a random collection.

It also gave me a chance to think even more deeply about the nature and practice of nonfiction writing, which I teach at the University of Roehampton. In the wider culture, narrative or ‘literary’ nonfiction can be presented as an oddity; it is ‘not’ fiction and ‘like’ fiction. Fiction is the reference point for artistic writing, and any attempt to include narrative nonfiction is perceived as a distortion of its core principles.

My own argument, in one of the journal’s essays, is that the Ur text for these discussions – Aristotle’s Poetics – explicitly does not exclude storytelling that makes a truth claim when it defines the principles of written art. Going further, I say that the telling of true stories can offer the most demanding test of those principles; demanding, and therefore exciting.

Ethics enters into the debate in multiple ways. I am not the first to argue that there can be an affinity between ‘doing the right thing’ and ‘doing it right’. For me, the search for fresh and precise language is a form of detailed reporting and verification, and the impulse to avoid cliché has an ethical dimension. Truth and beauty do not have to be opposites.

In passing, as an illustration, I apply the argument to conspiracy narratives. The ethical grounds for criticising conspiracy thinking focus on its unfalsifiable resistance to reason. In Aristotle’s Poetics, reason serves artistic plausibility. Aristotle himself warns that ethics and aesthetics can come into conflict: in life as in art, people tend to prefer the ‘plausible but impossible to the implausible but possible’. But even when the priority is aesthetic, not ethical, the question of what makes something ‘plausible’ is fraught. Conspiracy narratives are saturated with what Aristotle calls ‘false enthymemes’, premises that do not support the conclusion; incomplete chains of logic; exaggeration; confusion of precedence with cause; and a tin ear for ‘relation, aspect and manner’.

There is another aesthetic basis for criticism. For writing that aspires to some sort of success, the Poetics says, it must also create ‘astonishment’ and this requires sophistication in the portrayal of plot and motive. The problem with conspiracy narratives from this point of view is that when they are looked at together as a genre, they are wholly predictable and implausible. If the conspiracy theory is a genre, it is a hoary potboiler.

Dreaming with my feet on the ground

A few days ago, I celebrated a personal triumph. On February 28, the registry office at University College London rolled out its latest monthly list of graduates and my PhD in Information Studies (Publishing) took full official effect. Meet Dr Greenberg: author, editor, scholar, teacher, and occasional blogger.

Although insignificant in the bigger scheme of things, the triumph counts as major news on this blog, started four years ago to help me lithify stray thoughts about a new scholarly identity.

The odd thing is that so far, I have posted hardly anything about the doctoral thesis. Some reflection about a previous life as journalist, and news about other projects; but when it came to the thesis itself, I felt protective. Until it was written, I was not entirely sure what it all meant. The final title, ‘The Hidden Art of Editing’, only emerged in the last few months.

Hopefully, the work will not remain hidden any longer. One book is already contracted with Peter Lang, a set of fascinating interviews with practitioners. Another is now in the proposal stage: a countervailing view of editing as a way of opening up the text, rather than closing it down.

There is a lot of mystique about doing a PhD. Often, that is not helpful. It is stubborn persistence and hard-headed planning that allow a student to come up with the goods, along with steady, predictable support from the host institution. But there is something magical about the doctoral journey and its strange intensity.

Perhaps all major projects have this quality; the difference is that the doctoral thesis, at its best, must combine both imagination (the ‘original contribution to knowledge’) and solidity; the careful placement of stepping stones that allow the reader to retrace one’s steps.

In my case, the journey was taken later in life, alongside a full-time job. And by the nature of its subject matter, the research marked not just the start of a new professional life but the culmination of a previous one. On one level, there is a surprising constancy in the concerns pursued over the years. On another, my thinking has gone through a complete transformation.

Meanwhile, life happens. During the seven years since the start I began a new career, faced the end of a marriage, said goodbye to a parent and fought a life-threatening illness. It is not surprising that finishing became an act of defiance. It feels a big deal for the whole family too. Only a fraction of the extended family has a first degree, and I am only the second person with a doctorate; the first was won nearly 40 years ago.

Although personally I have met with only warm support and affirmation, our wider culture’s attitude to academic achievement is mixed, and sometimes contradictory. People demand qualifications from professionals as a marker for trust, but such demands are also sometimes attacked as ‘credentialism’. When a policy advisor was found to have lied about having a PhD, participants in the public debate were obliged to offer a basic defence of why that mattered.

For me, the PhD was never just about the qualification. It was about making discoveries that could be recognised by others; joining a conversation that might survive the tests of time.

It also feels valuable for its own sake. The last year of doctoral work, during a wonderful period of leave, provided dreamy freedom, perhaps the first since those long exam-less summers of girlhood. Not dreaming as in ‘ivory tower’, but dreaming as discovery. After a lifetime of short-term demands I was able to think things through; things that really mattered to me. The kind of dreaming that puts your feet on firmer ground.

The kind of dreaming that changes everything.

Fighting on two fronts

I came across an interesting debate last week about whether the digital humanities (DH) could be deemed responsible for a number of unpleasant trends in higher education, because of its supposed pro-industry bias. Which involved, in turn, a debate about definitions of the digital humanities.

It is a recurring debate, but the latest round started with a blog post by Daniel Allington, which was answered by Stephen Ramsay. This led to more interesting posts and links, such as this and this, plus a lively discussion in the comments thread of the original post.

I do not know all the ins and outs of the debate, nor the people involved – I take a strong interest in DH but my own work is currently at one remove. However, I recognise some patterns in the argument, which it seems helpful to share. Although possibly foolhardy, given the strength of feeling; so I apologise in advance for any errors or misunderstandings.

The main pattern is a tension between disciplines that can be described as practice-led, and those who perceive themselves as the champions of ‘theory’. I had to cut my teeth on this one around eight years ago, when first changing careers.

I discovered that, on top of the usual snobberies about tomorrow’s fish and chips, journalism had difficulty cutting it as a university subject if it took practice seriously, because it was deemed to lack a theoretical framework and because practitioners were perceived as ciphers representing industry interests. This perception framed discussion about ‘skills’, which were often belittled in importance and regarded suspiciously as the thin end of an industry wedge.

Trying to make sense of it all, I wrote a paper which attributed some of the tensions to the fact that Journalism Education’s institutional host – in the UK, most frequently Cultural Studies or related disciplines – had historically defined itself against journalism, setting itself the task of deconstructing practices and the tacit theories believed to lie behind them. In response…

practitioner-academics have often fought on two fronts, arguing with industry for more theoretical context and with academic colleagues for more practice-based content.

The paper also noted that while universities were understandably happy to profit from the popularity of practical media courses, the classroom experience would become a cynical exercise unless the interpretive framework could allow for a positive vision of the practice. On that occasion it was the ‘theory’ bods who were taking advantage of the subject’s popularity (because they had seniority – i.e. because they could) and not the ‘practitioners’.

I am myself in the happy circumstance of teaching in a creative writing programme. However, although literature attracts more respect than journalism, it turned out that creative writing as a discipline came in for more or less the same stick. Writers in the academy also find themselves fighting on two fronts, to make space for an approach that conceives practice ‘not as a branch of some other subject but as a thing in itself; not a corpus of knowledge, but a living experience.’*

The parallels with DH, a hands-on approach to scholarship that is also accused of being the thin end of the industry wedge, seem to be worth exploring.

As it happens, doing theory is a practice as well, with its own institutions and ethos suitable for analysis and interpretation, and its own fight for scarce resources. And as is proper for critical engagement and debate, people have different ideas about what theory is. What is maddening is when a party in the debate recognises only one definition of theory as theory.

I hope to post more on such matters on another occasion, and limit further comment now to a particular contribution in the latest DH debate, which had me sitting bolt upright:

Defenders of the digital humanities might ask themselves, with a bit more commitment than seems evident in public discourse, where such unwelcome associations keep coming from — apart, that is, from an utterly fantasized pure and/or personal malice — if they are really so thoroughly and consistently mistaken.

Lack of commitment and unwarranted suspicions – strong accusations indeed. But the fantasy of malice seems to work both ways, because the comment only makes sense if one assumes bad faith on the part of the opponent. There is also something a little creepy about holding the party that is the subject of an attack responsible for that attack.

The danger here is of taking the ‘hermeneutics of suspicion’ to such an extreme that one ends up telling one’s opponent what she or he really means. Because then, the debate really does get stuck in an Escher drawing.

* Myers, D. G. (1994) ‘The Lesson of Creative Writing’s History’, AWP Chronicle 26 (February): 1, 12-14

Publishing and the university

A nervous morning today, braving the public for the first time at the London Book Fair. Every year there are dozens of fascinating talks, panels and seminars. Last year, I noticed that although the place was swarming with lecturers and students, there didn’t seem to be any talks specifically about the university and its place in publishing. So I proposed one – it seemed like a good idea at the time.

The workshop panel will introduce some examples of innovation in this field, and put it into context. Then participants will have a chance to talk together and respond to the question, ‘How could a university help you?’; and for those already based at a university, ‘What would you want to offer?’ to outside organisations, either by way of research or practice. We are also curious what people think about the new generation of university imprints, and how they might distinguish themselves. We will collate the responses and disseminate them. The hashtags are #HEpublish and of course, #lbf13.

The panel includes a colleague who is starting up a new imprint, Fincham Press, at the University of Roehampton Department of English and Creative Writing, a colleague from UCL’s MA Publishing programme, and a researcher at UCL who is running the open access Ubiquity Press. The workshop is supported by the National Association of Writers in Education (NAWE).

My own short presentation considers the invisible support that universities provide to publishing and peer networks; recaps on the role of universities in book history; and takes some examples from the classroom that illustrate how a writing degree can help look at a manuscript from the inside out.

Creativity and the academy

Updated March 7

The Times Higher Education magazine has quoted my colleague Dr Louise Tondeur in an article, and has mentioned the conference she is organising this April for people interested in the practice-led disciplines in higher education.

The conference is being held at the University of Roehampton April 11 to 12, under the auspices of ReWrite, the Centre for Research in Creative and Professional Writing. The title is ‘Practice, Process and Paradox: Creativity and the Academy’.

Tickets can be booked via the university shop. Here is a downloadable A4 poster.

To declare an interest, I will be among the contributors, talking about ‘The Poetics of Editing’.